We evaluate whether Clarity can surface concealed materials, subtle anomalies, and difficult signatures that conventional sensing workflows may miss.

Evaluations can be judged on whether hyperspectral detection improves signal quality and reduces analyst noise relative to the incumbent workflow.

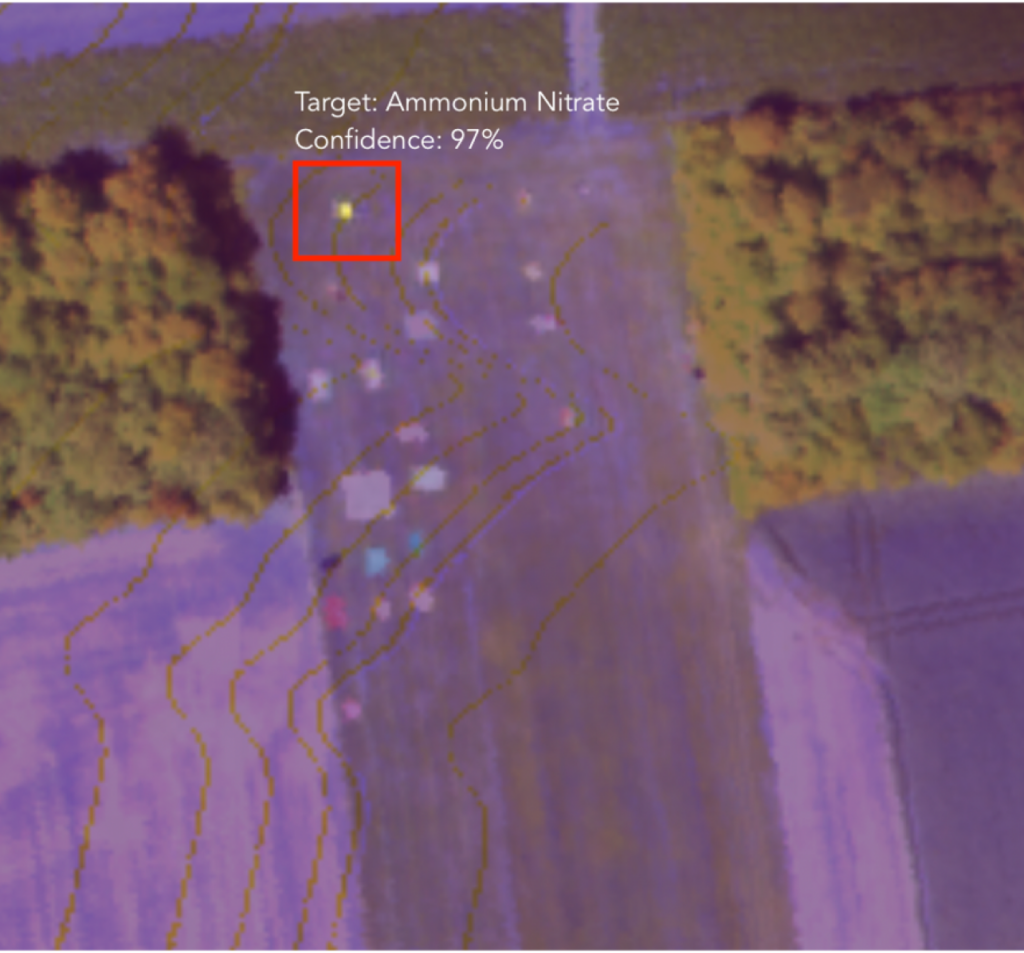

Detections are paired with spectral evidence, confidence, and mission-ready context your team can inspect and defend.

Why Clarity

1. Spectral Analysis Built for Difficult Environments

Clarity’s spectral models are designed for target detection and anomaly analysis in environments where conventional imagery has limited signal quality.

2. Reduced False Positives

Clarity's spectral detection methods are designed to reduce false alarms relative to traditional anomaly detection approaches, and each evaluation measures that reduction against the incumbent workflow.

3. Operational Integration

Deploy hyperspectral intelligence within existing geospatial analysis pipelines and mission workflows.

Example output: a customer-safe anomaly map or detection layer with spectral evidence, analyst review context, and a mission summary matched to the operational decision in scope.

Request a Customer-Safe Example

Mission Scoping Process

From threat definition to rapid operational proof-of-value.

1. Define Target Signature

Specify the material composition or anomaly signature of interest.

2. Secure Data Assessment

Evaluate data sources and operational constraints.

3. Rapid Detection Prototype

Deploy spectral detection models and produce operational intelligence outputs.

Questions Buyers Ask Before They Scope an Evaluation

What data sources do you support?

Evaluations are scoped around hyperspectral satellite, airborne, drone, and customer-provided imagery, including public sensors such as PRISMA, EnMAP, and EMIT where relevant to the mission and workflow.

What does an evaluation deliver?

A package with an anomaly map or detection layer, analyst-ready context, spectral evidence, and a next-step recommendation tied to the mission objective.

How is success judged?

Success can include rare or hidden target detection, false-positive reduction, analyst time saved, decision usefulness, output quality, and fit with the mission review workflow.

What is the minimum dataset needed?

That depends on the target and environment, but we can usually define a practical starting scope around a representative mission dataset.

Can this fit our existing workflow?

Yes. Evaluations are scoped around the review process, security constraints, and decision workflow your team already needs to support.