The Engine Powering Material Intelligence.

Efficient data processing, deployable inference, and explainable analysis for high-dimensional spectral and geospatial workflows.

What technical evaluators can review.

This overview lays out the evaluation path: what goes in, what comes out, how results stay reviewable, and how teams continue into documentation or a deeper technical discussion.

Inputs

Hyperspectral satellite, airborne, drone, industrial, and customer-provided data relevant to the workflow in scope.

Outputs

Target maps, class layers, crop-stress outputs, plume packages, and analyst-ready summaries matched to the use case.

Explainability

Spectral evidence, confidence context, and review cues that help teams inspect and defend how a result was produced.

Deployment and Docs

Cloud and edge deployment options, documentation, and a direct path to technical contact when a deeper review is needed.

The Paradigm Shift

Computer vision taught machines to see.

Material intelligence teaches them what things are made of.

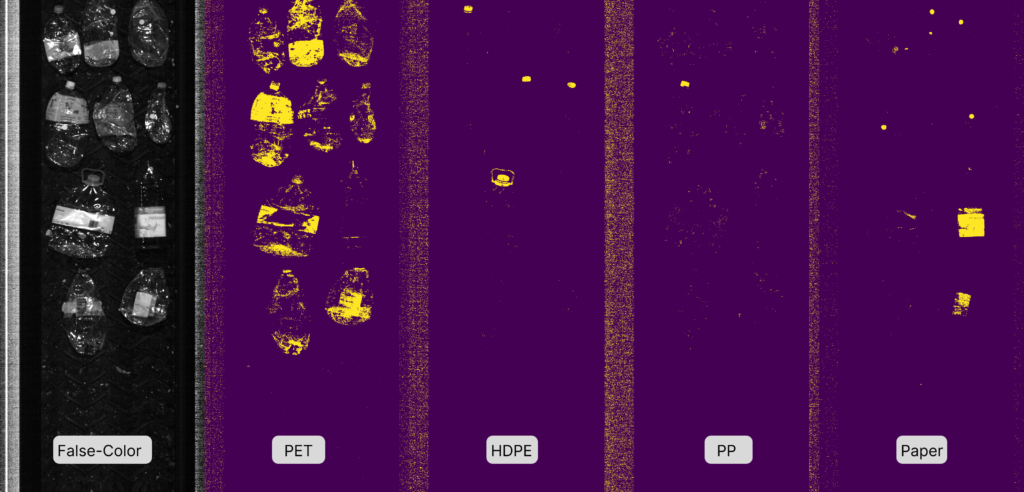

For decades, automated systems have relied on visual detection: RGB and near-infrared sensors that capture shape, size, and color. But visual data cannot reveal what an object is made of. Clarity processes hundreds of spectral bands per pixel and analyzes the spectral signature that reflects a material's chemical composition. It doesn't just see a black object; it identifies it as high-density polyethylene (HDPE). It doesn't just see a green field; it estimates the biochemical stress level of the crops.

Explainable Analysis for Operational Teams

Operator Interface

Complex spectral analysis should be accessible to domain experts. Clarity helps teams move from data review to model-driven outputs through guided workflows built for operational use.

Explainable AI (XAI)

Clarity surfaces the spectral evidence, confidence, and analytical path behind each result so teams can inspect, compare, and defend decisions.

Spectral Unmixing for Operational Analysis

Proprietary Tech Stack

At the core of Clarity are deep learning architectures optimized for high-dimensional data, including workflows tailored to spectral unmixing, classification, and target detection.
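
For readers new to spectral unmixing, the idea can be illustrated with the textbook linear mixing model, in which a pixel's spectrum is treated as a weighted sum of pure material spectra (endmembers). The sketch below is not Clarity's deep learning approach; it is a minimal two-endmember least-squares example, and the function name and the five-band spectra are made-up values for illustration only.

```python
# Toy linear unmixing of one pixel into two endmembers (illustrative only).
# All spectra below are invented numbers, not measured signatures.

def unmix_two_endmembers(pixel, endmember_a, endmember_b):
    """Return the abundance of endmember_a, clipped to [0, 1].

    Assumes pixel ~= f * endmember_a + (1 - f) * endmember_b and solves
    for f by least squares over all bands.
    """
    diff = [a - b for a, b in zip(endmember_a, endmember_b)]
    resid = [p - b for p, b in zip(pixel, endmember_b)]
    denom = sum(d * d for d in diff)
    if denom == 0:
        raise ValueError("endmembers are identical; abundance is undefined")
    f = sum(r * d for r, d in zip(resid, diff)) / denom
    # Clip to the physically meaningful range of a fractional abundance.
    return min(1.0, max(0.0, f))


# Hypothetical 5-band reflectance spectra.
vegetation = [0.05, 0.08, 0.04, 0.50, 0.45]
soil = [0.15, 0.20, 0.25, 0.30, 0.35]

# A pixel synthesized as 70% vegetation and 30% soil.
mixed = [0.7 * v + 0.3 * s for v, s in zip(vegetation, soil)]

fraction = unmix_two_endmembers(mixed, vegetation, soil)
```

Here `fraction` recovers the vegetation share of the synthetic pixel (about 0.7). Real scenes involve more endmembers, noise, and nonlinear mixing, which is where learned models become useful.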

Single-Signature Training

Traditional models require massive amounts of "in-the-wild" training data to learn a target. Our platform uses synthetic data generation to train target detection models from limited examples, in some cases from a single spectral signature, for difficult environments where labeled data is scarce.
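
One generic way to picture synthetic data generation from a single signature is to blend that signature into background spectra at random abundances and add sensor-like noise. The sketch below assumes a simple linear blend with Gaussian noise; the function name, parameters, and spectra are hypothetical and are not a description of the platform's actual generator.

```python
import random


def synthesize_training_set(target, backgrounds, n_samples, noise_std=0.01, seed=0):
    """Build labeled synthetic pixels from one target signature.

    Positive samples mix the target into a randomly chosen background at a
    random abundance; negative samples are background only. Gaussian noise
    is added to every band. Returns (spectrum, label, abundance) tuples.
    """
    rng = random.Random(seed)  # seeded so the set is reproducible
    samples = []
    for i in range(n_samples):
        background = rng.choice(backgrounds)
        is_target = i % 2 == 0  # alternate positives and negatives
        abundance = rng.uniform(0.1, 1.0) if is_target else 0.0
        spectrum = [
            abundance * t + (1.0 - abundance) * b + rng.gauss(0.0, noise_std)
            for t, b in zip(target, background)
        ]
        samples.append((spectrum, int(is_target), abundance))
    return samples


# Hypothetical 4-band spectra: one target signature, two backgrounds.
target_signature = [0.60, 0.55, 0.20, 0.10]
background_spectra = [
    [0.10, 0.12, 0.40, 0.45],
    [0.20, 0.22, 0.25, 0.30],
]

training_set = synthesize_training_set(target_signature, background_spectra, n_samples=100)
```

The resulting list holds 50 positive and 50 negative examples that a detector could be trained on, all derived from one reference signature.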

High-Speed Infrastructure

Built to handle the massive compute requirements of spectral data cubes.

Lossless Compression

Streaming hyperspectral data requires massive bandwidth. We implement CCSDS 123.0-B-2, the CCSDS standard for lossless and near-lossless multispectral and hyperspectral image compression, in spectral data workflows.
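
To show what "near-lossless" means in practice, the toy sketch below quantizes prediction residuals so that every reconstructed sample stays within a chosen maximum error. It is not an implementation of the CCSDS standard, which uses an adaptive predictor and an entropy coder; the predictor here is simply the previous reconstructed sample, and the function names and sample values are hypothetical.

```python
def encode_near_lossless(samples, max_error):
    """Quantize prediction residuals with a bounded reconstruction error.

    The prediction is the previous reconstructed sample (0 for the first
    one). Returns the list of integer quantizer indices.
    """
    step = 2 * max_error + 1
    indices = []
    prediction = 0
    for x in samples:
        residual = x - prediction
        magnitude = (abs(residual) + max_error) // step
        q = magnitude if residual >= 0 else -magnitude
        indices.append(q)
        # Track the value the decoder will reconstruct, not the original.
        prediction = prediction + q * step
    return indices


def decode_near_lossless(indices, max_error):
    """Rebuild samples from quantizer indices using the same predictor."""
    step = 2 * max_error + 1
    restored = []
    prediction = 0
    for q in indices:
        prediction = prediction + q * step
        restored.append(prediction)
    return restored


# Hypothetical integer samples for one pixel across adjacent bands.
spectrum = [1020, 1024, 1031, 1029, 1040, 1038]
codes = encode_near_lossless(spectrum, max_error=2)
restored = decode_near_lossless(codes, max_error=2)
```

With `max_error=2`, each value in `restored` differs from the original by at most 2; with `max_error=0` the round trip is exactly lossless. After the first sample, the indices are small integers, which is what makes them cheap to entropy-code.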

Hardware Acceleration

Real-time analytics demand substantial processing close to the sensor. We accelerate both edge and cloud pipelines to keep pace with operational data rates.